Vendors tout the potential, but responsibility remains unclear

Feature "You can't blame it on the box," says the boss of a UK financial regulator.

What about the people who sold you the box?

Good luck with that, says a global tech analyst.

When AI agents... are considered to operate on behalf of an organization, decision-making risk becomes ambiguous and unpredictable. It also signals AI risk redistribution with unknown parameters.

With AI agents now promising to "actively run the business," anyone looking for an explanation of who might take responsibility for the output of the supposedly world-conquering statistical machines might arrive at the paragraph above, not unreasonably.

While tech suppliers eye a trillion-dollar opportunity in AI, who carries the can if it goes wrong?

"There's a historic assumption that the vendor will be picking up liability if the thing is going to go wrong.

That's the point of origin for more or less all of these discussions," said Malcolm Dowden, senior technology lawyer at Pinsent Masons.

Users might be forgiven for having high expectations for AI, given the vendors' claims.

Announcing an expansion of its AI Agent Studio for Fusion Applications, Oracle said the technology would be "capable of reasoning, taking action across business systems, and continuously executing processes" such that its software could "actively run the business, with the governance, trust, and security that enterprises require."

In legal terms, though, vendors might see things differently.

Dowden said: "If you think of a normal tool or system, its behavior is predictable, so the giver of a warranty can have some pretty clear sense of how much liability you're taking on.

That's different with AI.

The more we get down the chain to what used to be called non-deterministic AI – mostly what falls into that agentic AI category – that gives a much greater scope for unexpected behaviors.

That's the big concern from a vendor perspective, if you're giving a warranty about how something will behave, but it's inherently unpredictable, then that makes it a very uncomfortable contractual promise to make."

It might also be concerning for the businesses using these systems, given what is at stake and the responsibilities they are expected to take.

For example, in the UK this week, the Financial Reporting Council (FRC) could not have been clearer in its guidance for AI adoption.

"While technology changes, the fundamental principle of our regulatory framework does not: it is people – the firms and Responsible Individuals – who are accountable for audit quality."

Or as FRC executive director Mark Babington told the Financial Times: "You can't blame it on the box.

If you use this technology, you are still accountable for it."

Nonetheless, technology buyers can at least try to hold their suppliers to account in the terms of the contract.

For example, users deploying AI to screen job applications should be aware that they could be challenged under data protection law because it is automated decision-making.

The UK's enforcer, the Information Commissioner's Office, has recently said it backs automation so long as users monitor for bias, are transparent with job seekers and explain their right to recourse.

Dowden said that on questions such as bias in the training model, user organizations would be liable because they are data controllers under UK law.

"They would then be looking to lay off that liability on the vendor through contractual provisions about explaining how the AI works, or a contractual obligation to make sure there is no inherent bias."

However, vendors are very likely to push back on a straightforward assertion that the bias must be in the model itself, he said.

They will want to look at the interaction between the model, the algorithm and the user prompts.

"We're seeing in terms of negotiated warranties things like a promise that the system has been tested for bias, and the test will be regularly updated, and the models will be calibrated, but no assumption of responsibility if the bias can be traced to the way in which the prompts have been created and formulated.

Both sides are essentially looking to establish the other as the liable party.

That's where negotiations are tending to focus," Dowden said.

Gartner has predicted that by mid-2026, new categories of unlawful AI-informed decision-making will generate more than $10 billion in remediation costs across global AI vendors and enterprises that leverage AI.

Lydia Clougherty Jones, Gartner VP analyst, said decision-making by AI agents may take AI liability to a new level.

"Organizations that fail to immediately adopt defensible AI, make AI-ready data 'AI-decision-making ready' and extensively overhaul ML model explainability are at risk of significant loss of investment, government investigations, civil penalties and, in some cases, criminal liability."

Clougherty Jones recommended that users should get to grips with the idea of "defensible AI." That means focusing on techniques, including AI decision-making, "that can reliably and repeatedly withstand scrutiny, questioning, and examination."

Organizations might want to deploy content and decision-making guardrails for language-model-based solutions across the entire life cycle of AI from data to model to output, she said.

Last week, Balaji Abbabatulla, Gartner vice president and lead analyst for Oracle, said there was a lot of legal language to protect the vendors in terms of technology.

Instead of being legally liable, they talk about monitoring, observability and audits.

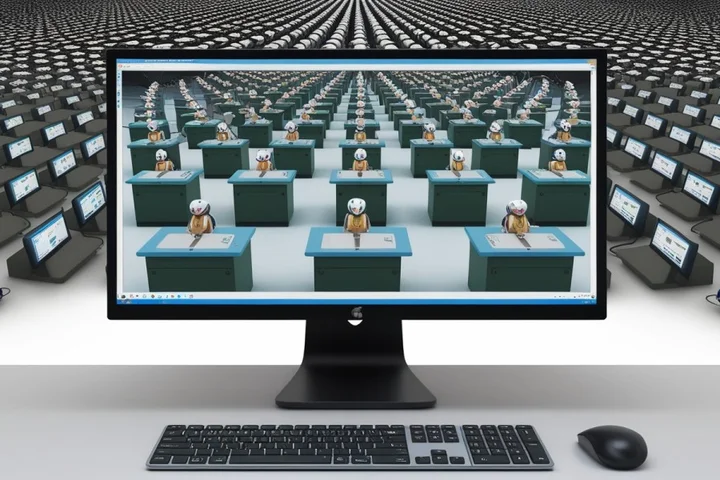

"The difference between AI agent decisions and human decisions is the scale and the pace of those decisions, and they could quickly cascade," he said.

"If there's something wrong and if it's not identified and prevented, then it could quickly cascade before anybody even takes note of the issue.

They're talking about continuous monitoring to identify exceptions: guardian agents, as we call them.

But the issue around liability is the key challenge for all vendors."

It was precisely the risk of erroneous output cascading unnoticed that worried vendors about accepting liability, said Georgina Kon, Linklaters partner in digital, data and commercial law.

"The magnification risk is massive but also there is the difficulty in working out who is responsible," Kon said.

"A lot of the current laws don't really lend themselves particularly easily, because it assumes always that a human or company is doing something and that's not true.

But you can't also have a world where people are creating agents and not liable for them.

What it comes down to is what the market can bear commercially."

For this reason, the vendors were soft-launching products and testing them out with users first.

As with social media in the early part of the century, the way people will deploy and respond to AI agents is yet to play out, Kon said.

"When you have things like AI, it's just another crest of a hill where you have no idea what's ahead of you, because these agents could be unexpected, they could learn the wrong thing as well.

No wonder vendors won't take responsibility for everything, but what they can take responsibility for are the processes they followed, and the safeguards that they have implemented.

From a profitability perspective, there will come a point where it's not attractive for them to develop agents that they then might have typical contractual liability for."

However, some users were happy to go ahead and deploy agents so they could stay on the bleeding edge of their market or gain process efficiency, accepting the risk themselves.

It will depend on the sector, Kon said, with financial services and healthcare, for example, being more conservative in their approach.

AI investment is set to reach $2.52 trillion this year, with the bulk of it coming from hyperscalers, model builders, and software companies.

They will want to see a good return on the outlay.

Any senior IT manager or director will testify to the bold marketing claims of the vendors promising to automate internal decision-making at an unprecedented speed and scale.

But holding them liable for the output will remain a challenge until the law is clearer, and cases have gone through the courts.

The major application vendors were offered the opportunity to explain how much liability they accept in their customers' implementation of AI agents.

Microsoft and SAP refused to comment.

Workday, Salesforce, ServiceNow, and Oracle have not responded.

Despite the industry hype, matching market claims to legal responsibility remains a difficult circle for them to square.

®

Source: This article was originally published by The Register
